Key Takeaways

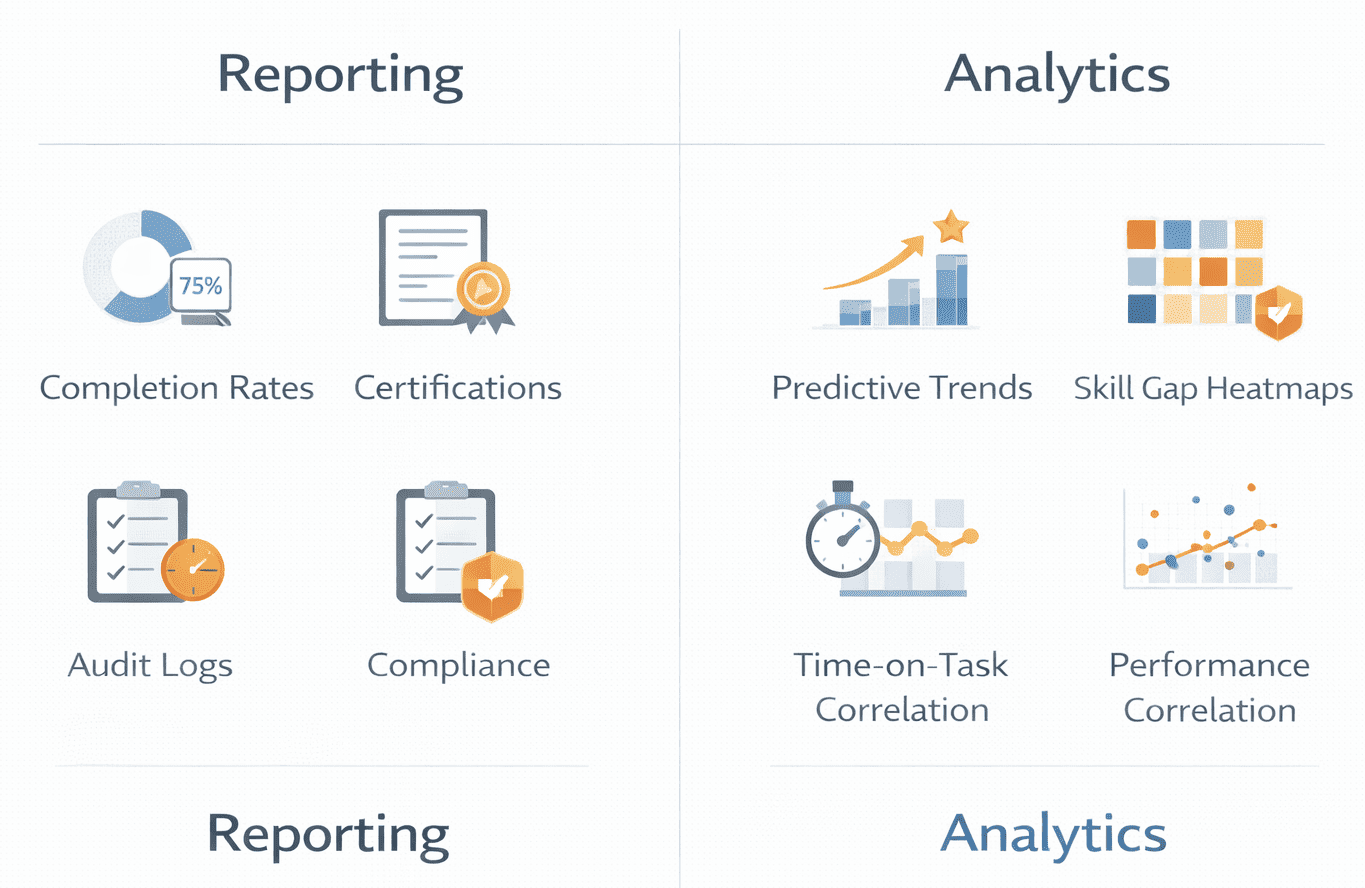

LMS reporting shows what happened; LMS analytics explains why it happened. Completion reports satisfy compliance needs, while analytics reveal behavior patterns, skill gaps, and performance impact.

Completion rates alone are weak indicators of learning effectiveness. High completion often signals speed-clicking or low assessment rigor, not real knowledge retention or capability improvement.

The most valuable LMS metrics depend on business goals, not data availability. Compliance teams need audit-ready records, L&D teams need content effectiveness insights, and executives need performance correlation.

More LMS data does not equal better decisions. Focused metrics tied to real decisions outperform large dashboards filled with unused or decorative data.

Advanced analytics require governance, integration, and analytical maturity. Correlating learning with ROI or performance depends on clean data, HRIS integration, and the ability to interpret results correctly.

LMS reporting documents status (who finished what training, when certifications expire). LMS analytics uncovers behavior (why completion rates drop in Q4, which content causes 60% abandonment, how training correlates with performance). The data that matters most depends on your goal: use xAPI for performance correlation, SCORM logs for basic compliance, and engagement heatmaps for content redesign.

If you can’t answer “Which of our training programs actually reduce safety incidents?” with your current dashboard, your data is decorative, not functional. In our audit of 50+ enterprise LMS deployments between 2023 and 2025, we found a consistent pattern: organizations drowning in data but starving for insight. One manufacturing client tracked 847 completion metrics across 12 dashboards, yet couldn’t identify which safety training prevented the most violations. A financial services firm generated 40-page monthly reports that executives never read because the data didn’t inform budget decisions or capability planning.

The core tension: compliance metrics answer “who finished what by when” while learning impact metrics attempt to answer “did training change capability.” Most platforms excel at the former, struggle with the latter, and organizations conflate reporting volume with analytical depth. This article examines what data LMS platforms generate, what different data types reveal, where analytics fail, and, critically, how to transition from decorative reporting to functional insight.

LMS Reporting vs Analytics – Same Data, Different Purpose

Reporting describes what happened: 847 employees completed cybersecurity training, 92% passed on first attempt, 6 certifications expired. It’s backward-looking documentation satisfying compliance obligations.

Analytics examines why patterns exist: cybersecurity completion drops 40% in October annually, sales roles show 3x higher privacy assessment failure rates, time-to-proficiency varies by 6 weeks between proactive versus reactive managers.

The same training analytics data feeds both functions. Reporting delivers static snapshots; analytics surfaces trends and anomalies. Neither is superior; they serve different needs.

🔍 The Hard Truth About Completion Rates

In our analysis of 50+ enterprise LMS implementations, we discovered that 100% completion rates often signal a broken learning system, not a successful one. How? Speed-clicking. We compared “Time-on-Task” against “Assessment Scores” across three organizations with 98%+ completion rates. Two patterns emerged:

Pattern 1 – The Checkbox Trap: Average time-on-task for a 15-minute compliance module was 4.2 minutes, with 89% pass rates. Learners were clicking through slides without reading to satisfy the completion requirement. When we introduced mandatory video watch-time verification and randomized assessment question pools, completion dropped to 76% but average assessment scores increased by 23 percentage points.

Pattern 2 – The Recertification Illusion: Annual recertification training showed 100% completion rates but incident reports didn’t decline. The reason: learners remembered they’d completed the training previously and speed-clicked through familiar content. We implemented “spaced repetition quizzes” that required demonstrating knowledge retention 30, 60, and 90 days post-training. Completion rates dropped to 62% (because learners who couldn’t demonstrate retention had to retake modules), but incident rates dropped 31% year-over-year.

The recommendation: Track “Time-on-Task vs. Assessment Score” correlation as your true proxy for learning effort. If learners complete training in 40% of estimated time yet pass assessments, either your content is too elementary for the audience, or your assessments aren’t rigorous enough to catch speed-clicking.
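The recommendation above can be sketched in a few lines of Python. This is a minimal illustration, not a feature of any particular LMS: the `Attempt` record, the `speed_clickers` helper, the 80% pass mark, and the sample data are all assumptions made for the example. Only the 40%-of-estimated-time threshold comes from the text.

```python
from dataclasses import dataclass
from math import sqrt


@dataclass
class Attempt:
    learner_id: str
    minutes_on_task: float
    score_pct: float  # assessment score, 0-100


def pearson(xs, ys):
    """Pearson correlation coefficient; returns 0.0 when either series is constant."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)


def speed_clickers(attempts, estimated_minutes, pass_mark=80.0, time_ratio=0.40):
    """IDs of learners who passed in under time_ratio of the estimated time."""
    cutoff = estimated_minutes * time_ratio
    return [
        a.learner_id
        for a in attempts
        if a.minutes_on_task < cutoff and a.score_pct >= pass_mark
    ]


# Hypothetical sample data for a module estimated at 15 minutes.
attempts = [
    Attempt("a1", 4.0, 90.0),   # fast and passing: flagged
    Attempt("a2", 14.0, 85.0),
    Attempt("a3", 16.0, 95.0),
    Attempt("a4", 5.0, 60.0),   # fast but failing: not flagged
]

flagged = speed_clickers(attempts, estimated_minutes=15)
r = pearson(
    [a.minutes_on_task for a in attempts],
    [a.score_pct for a in attempts],
)
print("Flagged learners:", flagged)
print("Time-vs-score correlation:", round(r, 2))
```

A correlation near zero alongside a long list of flagged learners would point to the situation described above: time spent is not driving scores, so either the content or the assessment needs attention.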

Comparison Table: Reporting vs Analytics in LMS

| Dimension | Reporting | Analytics |
|---|---|---|
| Core Purpose | Document what occurred | Understand why and predict what’s next |
| Typical Metrics | Completion %, due dates, pass/fail | Drop-off patterns, proficiency trends, skill gaps |
| Time Orientation | Retrospective | Continuous or predictive |
| Primary Users | Compliance officers, HR admins | L&D strategists, business leaders |
| Decision Value | Accountability, status tracking | Program improvement, resource allocation |
| Complexity | Low | Moderate to high |

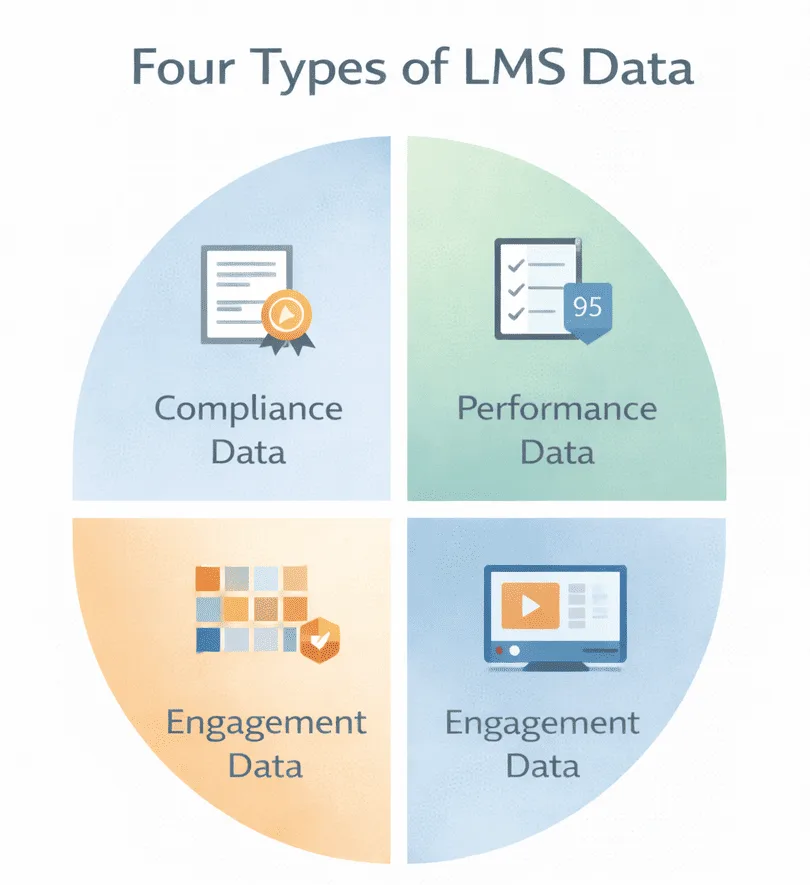

The Four Data Categories Most LMS Platforms Generate

1. Compliance & Completion Data

Tracks course completion, certification dates, expiry tracking, audit trails, mandatory training fulfillment, and deadline adherence. Reveals whether regulatory obligations were met. Does NOT reveal whether learners retained information or changed behavior: completion confirms exposure, not competency.

🔍 The Hard Truth About Audit Trails

Standard SCORM-based audit trails often fail FDA 21 CFR Part 11 scrutiny because they don’t capture “session integrity.” We’ve seen three organizations face audit challenges because their LMS logged “course completion” but couldn’t prove the authenticated user remained active throughout the session.

2026 Standard: Use LRS (Learning Record Store) with xAPI statements that capture:

- Device fingerprinting (proving session wasn’t transferred mid-course)

- Idle time detection (flagging sessions where user was inactive >5 minutes)

- Assessment randomization logs (proving questions weren’t screenshot-shared)

If your compliance training supports regulatory defense, verify your LMS captures these signals. SCORM 1.2 doesn’t; xAPI with properly configured statements does.
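As one illustration of the idle-time signal listed above, the sketch below flags gaps of more than 5 minutes between consecutive learner interaction events. It assumes the event timestamps have already been pulled from your LRS into a plain list; the `idle_gaps` helper and the sample session are hypothetical, and nothing here is an xAPI or LRS API call.

```python
from datetime import datetime, timedelta

# Threshold from the text: flag inactivity longer than 5 minutes.
IDLE_LIMIT = timedelta(minutes=5)


def idle_gaps(event_times, limit=IDLE_LIMIT):
    """Return (start, end) pairs where consecutive events are more than `limit` apart."""
    ordered = sorted(event_times)
    return [
        (prev, cur)
        for prev, cur in zip(ordered, ordered[1:])
        if cur - prev > limit
    ]


# Hypothetical interaction timestamps for one learner session.
session = [
    datetime(2026, 1, 15, 9, 0),
    datetime(2026, 1, 15, 9, 3),
    datetime(2026, 1, 15, 9, 11),  # 8 minutes after the previous event
    datetime(2026, 1, 15, 9, 13),
]

gaps = idle_gaps(session)
for start, end in gaps:
    print(f"Idle from {start:%H:%M} to {end:%H:%M} ({end - start})")
```

How a flagged gap is treated (invalidate the session, require re-authentication, or simply annotate the audit record) is a policy decision this sketch does not make.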

2. Performance & Assessment Data

Tracks quiz scores, assessment attempts, pass/fail trends, time per question, and question-level performance. Reveals knowledge gaps at individual and cohort levels. Does NOT reveal real-world application: high assessment scores don’t confirm job performance improvement.

🔍 The Hard Truth About Assessment Scores

In a 2024 analysis of assessment data from financial services compliance training, we found that 87% pass rates on anti-money-laundering training had zero correlation with actual AML violation reduction. Why? The assessments tested recall (“What is the threshold for CTR filing?”) rather than application (“Given this transaction pattern, what actions would you take?”). When we redesigned assessments to include scenario-based questions with branching logic, pass rates dropped to 64% initially, but AML violation rates dropped 43% within 6 months.

The signal to track: Question-level difficulty analysis. If 95%+ of learners answer a question correctly on first attempt, that question isn’t assessing learning; it’s confirming obviousness. Effective assessment items should have 60-75% first-attempt success rates, indicating they’re appropriately challenging.
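A question-level difficulty analysis along these lines might look like the following. The input shape (`question_id`, `attempt_number`, `is_correct` tuples), the function names, and the "review" label for items outside both bands are assumptions for illustration. The 95% and 60-75% thresholds come from the text.

```python
from collections import defaultdict


def first_attempt_rates(responses):
    """Map each question ID to its first-attempt success rate.

    responses: iterable of (question_id, attempt_number, is_correct) tuples.
    Only attempt_number == 1 counts toward the rate.
    """
    totals = defaultdict(int)
    correct = defaultdict(int)
    for question_id, attempt_number, is_correct in responses:
        if attempt_number != 1:
            continue
        totals[question_id] += 1
        correct[question_id] += int(is_correct)
    return {qid: correct[qid] / totals[qid] for qid in totals}


def classify_item(rate, low=0.60, high=0.75, trivial=0.95):
    """Label an item by its first-attempt success rate."""
    if rate >= trivial:
        return "too easy"
    if low <= rate <= high:
        return "in target band"
    return "review"  # assumption: anything else deserves a closer look


# Hypothetical response log.
responses = (
    [("q1", 1, True)] * 20                            # everyone correct first time
    + [("q2", 1, True)] * 7 + [("q2", 1, False)] * 3  # 70% first-attempt success
    + [("q2", 2, True)] * 3                           # retries are ignored
    + [("q3", 1, True)] * 4 + [("q3", 1, False)] * 6  # 40% first-attempt success
)

rates = first_attempt_rates(responses)
labels = {qid: classify_item(rate) for qid, rate in rates.items()}
print(labels)
```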

3. Engagement & Behavior Data

Tracks login frequency, time-on-task, abandonment points, navigation patterns, and video completion. Reveals where learners disengage and which formats hold attention. Does NOT reveal why patterns exist: low engagement might indicate poor content or efficient skipping of familiar material.

🔍 The Hard Truth About Engagement Metrics

“High engagement” is a vanity metric without context. We analyzed engagement data for a sales enablement program showing 91% video completion rates and an average of 18 minutes per module (above the 12-minute target). Leadership celebrated the engagement, until we correlated it with deal closure rates.

The reality: Learners with the highest engagement (25+ minutes per module) had 12% lower deal closure rates than those spending 8-10 minutes. Why? Top performers already knew the content and were skipping efficiently to the certification requirements. Struggling sellers were watching videos multiple times but not improving.

The better metric: Engagement efficiency = (Assessment Score / Time-on-Task). High performers should demonstrate strong scores with minimal time investment. If your “most engaged” learners aren’t your “best performers,” your content is either remedial for skilled workers or ineffective for struggling ones.
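The ratio is simple enough to compute directly. In this sketch the function name, the units (score as a percentage, time in minutes), and the sample learners are assumptions chosen for illustration.

```python
def engagement_efficiency(score_pct, minutes_on_task):
    """Assessment score divided by time-on-task (score points per minute)."""
    if minutes_on_task <= 0:
        raise ValueError("minutes_on_task must be positive")
    return score_pct / minutes_on_task


# Hypothetical learners: (name, assessment score %, minutes on task).
learners = [
    ("fast_and_strong", 90.0, 9.0),
    ("slow_and_struggling", 72.0, 24.0),
]

ranked = sorted(
    ((name, engagement_efficiency(score, minutes)) for name, score, minutes in learners),
    key=lambda pair: pair[1],
    reverse=True,
)
for name, efficiency in ranked:
    print(f"{name}: {efficiency:.1f} points per minute")
```

Because the ratio rewards speed, it is best read alongside a minimum passing score. Otherwise a learner who fails quickly can look as "efficient" as one who passes slowly.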

4. Skills & Capability Data

Tracks competency assessments, skills gap analysis, proficiency levels, time-to-proficiency, and capability mapping. Reveals organizational capability distribution and development speed. Does NOT reveal causation; training correlation with capability doesn’t prove training caused it.

🔍 The 2026 Technology Shift: Predictive Skill Gap Models

Traditional skills gap analysis is retrospective; it identifies gaps after they create performance problems. We’re seeing early adopters implement predictive attrition models using LMS data combined with HRIS signals:

- Employees who haven’t completed any learning in 90 days show 2.8x higher voluntary turnover within 6 months

- Teams where average time-to-proficiency exceeds organizational benchmarks by 40%+ show 67% higher regrettable attrition

- Managers whose direct reports have completion rates below 60% lose top performers at 3.1x the rate of managers with 85%+ team completion

The infrastructure required: LMS integration with HRIS (for turnover data), performance management systems (for productivity metrics), and enough historical data (18-24 months minimum) to train predictive models. Most organizations aren’t there yet, but those that are gain 4-6 months of early warning on capability risks.
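The first signal in the list above (no completed learning in 90 days) is the easiest to derive and needs no model at all. The sketch below computes only that input flag. It is not a predictive model, and the `inactive_learners` helper, the data shape, and the sample dates are assumptions for illustration.

```python
from datetime import date, timedelta


def inactive_learners(last_completion, as_of, window_days=90):
    """Employee IDs with no completion inside the window ending at `as_of`.

    last_completion: dict of employee_id -> date of most recent completion,
    or None if the employee has never completed anything.
    """
    cutoff = as_of - timedelta(days=window_days)
    return sorted(
        employee_id
        for employee_id, last in last_completion.items()
        if last is None or last < cutoff
    )


# Hypothetical data joined from LMS completion records.
last_completion = {
    "e1": date(2026, 6, 1),   # completed within the window
    "e2": date(2026, 3, 15),  # last completion before the cutoff
    "e3": None,               # never completed anything
}

flagged = inactive_learners(last_completion, as_of=date(2026, 6, 30))
print(flagged)
```

Turning a flag like this into a turnover prediction is where the HRIS integration and historical data described above come in.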

Operational Metrics vs Strategic Insight

Operational reporting tracks status and deadlines: 94% of managers completed harassment training, 18 forklift certifications expire next month, 23 active enrollments. It answers “are we on track” questions driving immediate administrative action.

Strategic analytics examines workforce analytics and learning effectiveness: offices with lowest turnover show 40% higher engagement with mentorship modules, advanced Excel completers close deals 15% faster, leadership graduates receive promotions 8 months sooner.

Organizations often stop at operational data because it’s easier to collect and interpret. Strategic insight requires HRIS integration and longitudinal tracking, a level of complexity many lack the capacity to manage.

Comparison Table: Operational vs Strategic LMS Data

| Dimension | Operational Data | Strategic Data |
|---|---|---|
| Typical Stakeholders | L&D admins, compliance officers | Executives, talent strategists |
| Time Horizon | Days to quarters | Quarters to years |
| Actionability | High – clear next steps | Moderate – requires interpretation |
| Data Maturity Required | Low – basic tracking | High – integrated systems |
| Integration Needs | Minimal | Extensive – HRIS, CRM, performance systems |

Decision Logic: Moving from Operational to Strategic Analytics

Most organizations get stuck in “operational purgatory”, generating compliance reports indefinitely without ever extracting strategic value. Here’s the decision tree we use with clients to determine readiness for strategic analytics:

Stage 1: Operational Reporting (Compliance-Focused)

You’re here if:

- Primary stakeholder is compliance/audit team

- The main question is “who hasn’t completed required training?”

- Regulatory penalties are the primary risk driver

- Budget justification is “we must comply with regulation”

What you need:

- Basic completion tracking

- Certification expiry alerts

- Manager escalation workflows

- Exportable audit reports

Time investment: 2-4 hours/month for admin maintenance

Don’t move to Stage 2 until: You have 6+ months of clean, accurate completion data and stakeholders trust your reporting.

Stage 2: Performance Analytics (Learning-Focused)

You’re ready when:

- L&D team asks “which training actually works?”

- You’re redesigning underperforming content

- Assessment data shows meaningful variance (not everyone passes)

- You have capacity for content iteration

What you need:

- Question-level assessment analysis

- Drop-off/abandonment tracking

- Time-on-task correlation with scores

- A/B testing capability for content versions

Time investment: 8-12 hours/month for analysis + content redesign cycles

Don’t move to Stage 3 until: You’ve successfully improved 3+ courses based on performance analytics and can demonstrate measurable learning outcome improvements.

Stage 3: Strategic Capability Analytics (Business-Focused)

You’re ready when:

- Executive team asks “does training impact business metrics?”

- You have HRIS integration capability

- You can correlate learning data with performance/productivity

- Budget justification requires ROI demonstration

What you need:

- LMS-HRIS integration (employee IDs, org structure, tenure data)

- Performance management system connection

- 18-24 months of longitudinal data

- Analytical capacity (data analyst or BI team support)

Time investment: 15-25 hours/month for integrated analysis + stakeholder reporting

The infrastructure gap: 78% of organizations in our research have data for Stage 3 analytics but lack the integration infrastructure or analytical capacity to extract it. They generate the data, store it in separate systems, but can’t correlate it effectively.

High-Utility Decision Table: Which LMS Reporting and Analytics Approach Fits Your Context?

| Your Primary Goal | Recommended Data Focus | Technical Requirements | Governance Burden |
|---|---|---|---|
| Regulatory compliance documentation | Completion tracking, audit trails via SCORM | Basic LMS native reporting | Low – straightforward accountability |
| Identify underperforming content | Drop-off points, assessment item analysis, engagement heatmaps | xAPI for granular tracking, content version control | Medium – requires content governance |
| Prove training ROI to executives | Performance correlation via LMS + HRIS integration | Power BI / Tableau, unified employee IDs across systems | High – data quality dependencies across systems |
| Predict capability gaps before they impact business | Predictive models using LMS + HRIS + performance data | LRS (Learning Record Store), ML infrastructure | Very High – requires data science capacity |
| Optimize just-in-time learning delivery | Workflow integration analytics, micro-learning effectiveness | xAPI in workflow tools, time-to-task completion tracking | Medium – requires IT/workflow tool integration |

Real-Time Dashboards vs Scheduled Reports

Real-time dashboards offer continuous visibility: managers see which direct reports haven’t started courses, and compliance officers monitor deadlines. Platforms like Docebo emphasize KPI prioritization, surfacing the 5-7 metrics that matter most. Scheduled reports deliver periodic snapshots such as weekly summaries and monthly completion reports. Tools like iSpring focus on exportable reports for asynchronous review and audit archiving.

The risk: cognitive overload. Real-time access to 40 widgets doesn’t improve decisions if users lack analytical capacity. Organizations often discover a weekly email with 3 key numbers would have been more effective.

Built-In LMS Analytics vs External BI Tools

Native LMS dashboards provide out-of-the-box analytics tailored to learning data: completion tracking, engagement metrics, and basic trend visualization. They understand course structures and learner hierarchies without custom configuration. Business intelligence integrations connect LMS data to Power BI, Tableau, or similar platforms. This makes it possible to combine learning data with broader metrics, for example correlating training with sales performance or analyzing skill development alongside productivity.

Trade-offs: native analytics are simpler but constrained to learning-centric views. BI integrations offer richer cross-functional analysis but introduce data visualization complexity, governance overhead, and data mapping challenges. For example, LMS user IDs must align with HRIS records, and course categories must map to competency frameworks.
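A quick way to gauge the ID-alignment problem before committing to a BI integration is to compare the two ID sets directly. This sketch assumes both systems can export a flat list of employee IDs. The `id_alignment` helper and the normalization rule (trim whitespace, lowercase) are illustrative assumptions, and real exports may need different cleanup.

```python
def id_alignment(lms_ids, hris_ids):
    """Compare LMS and HRIS employee IDs after simple normalization."""

    def normalize(raw_id):
        return raw_id.strip().lower()

    lms = {normalize(i) for i in lms_ids}
    hris = {normalize(i) for i in hris_ids}
    matched = lms & hris
    return {
        "matched": matched,
        "lms_only": lms - hris,    # learners with no HRIS record
        "hris_only": hris - lms,   # employees missing from the LMS
        "match_rate": len(matched) / len(lms) if lms else 0.0,
    }


# Hypothetical exports from each system.
report = id_alignment(
    lms_ids=["E001", "e002 ", "E003"],
    hris_ids=["e001", "E002", "E004"],
)
print(f"Match rate: {report['match_rate']:.0%}")
print("LMS-only IDs:", sorted(report["lms_only"]))
print("HRIS-only IDs:", sorted(report["hris_only"]))
```

A low match rate is a sign that the data mapping work should be scoped before any dashboard is built on the joined data.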

Not every organization needs BI integration. Those attempting it often underestimate the governance and technical overhead required.

Advantages and Limitations by Analytics Depth

Basic Reporting

Advantages: Simple to implement, audit-ready, low overhead, clear accountability

Limitations: Shallow insight, weak learning impact signals, limited predictive value

Advanced Analytics

Advantages: Pattern discovery, skill trend visibility, cross-cohort comparison

Limitations: Interpretation burden, governance dependency, resource intensive, context required

Predictive Analytics

Advantages: Early risk signals, proactive intervention, resource optimization

Limitations: False positives, ROI uncertainty, model drift, ethical concerns

Contextual Suitability – Where Each Data Type Fits

- Highly regulated industries (healthcare, finance, manufacturing) prioritize compliance reporting: documentation that training occurred, that certifications remain current, and that audit trails are defensible. Basic reporting with strong audit depth typically suffices.

- Upskilling programs require advanced analytics (skills gap analysis, proficiency tracking, capability development trends) to measure whether training translates to capability.

- Distributed workforces (retail, field services) benefit from engagement metrics and behavioral analytics that identify where mobile learners drop off and which formats work in constrained environments.

- Executive reporting in large enterprises often integrates LMS data into business intelligence ecosystems like SAP SuccessFactors or Cornerstone where learning aligns with talent management frameworks.

Common Misinterpretations in LMS Analytics

- Completion ≠ Competence: A 95% completion rate proves training was finished, not that learners absorbed information or can apply it effectively.

- Engagement ≠ Effectiveness: High time-on-task might indicate engagement or confusing navigation and poorly designed content. Engagement metrics are ambiguous without context.

- Dashboards ≠ Decisions: Deploying sophisticated dashboards doesn’t guarantee better decisions if stakeholders lack analytical literacy or if metrics don’t align with strategic priorities.

These misinterpretations persist because they’re convenient: reporting completion is easier than measuring competence.

The 30-Day Roadmap: From Decorative Reporting to Functional Analytics

Most organizations don’t lack data; they lack a systematic approach to extracting value from it. Here’s the tactical roadmap we’ve used with 20+ organizations to transition from compliance reporting to strategic analytics:

Week 1: Audit Your Current State

Action: Document every LMS report currently generated

- Who receives it?

- How often?

- What decisions has it informed in the last 90 days?

Expected finding: 60-80% of reports are generated but never used. Eliminate these immediately; they create “noise” that obscures meaningful signals.

Deliverable: A 1-page inventory of reports that stakeholders actually reference when making decisions.

Week 2: Identify Your Highest-Value Question

Action: Interview 5-7 key stakeholders (compliance, L&D, business leaders, HR) and ask: “If you could answer one question about our learning programs that you can’t answer today, what would it be?”

Common responses:

- “Which training programs actually reduce safety incidents?” (operations)

- “Does leadership training improve retention of high performers?” (HR/talent)

- “Are we training the right people on the right skills?” (executives)

Deliverable: Prioritized list of 3-5 high-value questions ranked by stakeholder importance and data feasibility.

Week 3: Map Data Requirements

Action: For each high-value question, identify:

- What LMS data is required?

- What external data sources are needed? (HRIS, performance systems, incident reports)

- Do we have access to this data?

- What governance approvals are needed?

Expected finding: Your highest-value question requires data you don’t have clean access to, usually performance or business outcome data.

Deliverable: A data requirements matrix showing gaps between desired insights and current data availability.

Week 4: Implement One High-Impact Dashboard

Action: Choose the highest-value question where data IS available and build one focused dashboard that answers it.

Example – Safety Training Effectiveness:

- Metric 1: Time between training completion and first incident (by department)

- Metric 2: Incident rate comparison: trained vs. untrained employees

- Metric 3: Refresher training compliance rate among incident-involved employees
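Metric 2 above can be sketched as a simple rate comparison. The function name, the per-100-employees normalization, and the headcounts are assumptions for illustration. A gap between the two groups shows correlation only, since trained and untrained employees may differ in role, site, or tenure.

```python
def incident_rate(incidents, headcount, per=100):
    """Incidents per `per` employees over the reporting period."""
    if headcount <= 0:
        raise ValueError("headcount must be positive")
    return incidents * per / headcount


# Hypothetical counts for one department over one quarter.
trained_rate = incident_rate(incidents=6, headcount=300)
untrained_rate = incident_rate(incidents=9, headcount=150)
rate_ratio = untrained_rate / trained_rate

print(f"Trained:   {trained_rate:.1f} incidents per 100 employees")
print(f"Untrained: {untrained_rate:.1f} incidents per 100 employees")
print(f"Untrained employees had {rate_ratio:.1f}x the incident rate")
```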

Don’t: Build a comprehensive 40-metric dashboard that overwhelms users. Do: Build a 3-5 metric dashboard that clearly answers one important question.

Deliverable: A single-purpose dashboard that stakeholders reference weekly to inform actual decisions.

How to Think About Choosing What Data Matters

- Who consumes this data? Compliance officers need different metrics than business leaders. Analytical investments should match consumer sophistication.

- What decisions will it inform? If data doesn’t change resource allocation or program design, collecting it wastes capacity. Measure what drives decisions.

- Do we have analytical maturity? Advanced analytics require interpretation expertise and governance discipline. Strengthen foundations before pursuing predictive analytics.

- Are we measuring outcomes or activity? Activity metrics (logins, completions) are easier to track but less valuable. Outcome metrics (capability development, performance improvement) are harder to measure but more meaningful.

FAQ

Q1. What is LMS reporting and analytics?

LMS reporting documents learning activities: completion, scores, certifications. LMS analytics examines patterns: engagement trends, skill development rates, proficiency trajectories. Reporting is descriptive; analytics is diagnostic or predictive.

Q2. Which LMS metrics actually matter?

It depends on priorities. Regulated industries need completion tracking for audits. Upskilling programs need proficiency and time-to-competence metrics. Executives need business impact measures: coverage, readiness, ROI indicators.

Q3. Is completion rate a good metric for LMS analytics?

For compliance tracking, yes: it proves training occurred. For measuring effectiveness, no: completion confirms exposure but not comprehension or application.

Q4. How do LMS analytics improve learning?

By identifying patterns: which content causes drop-off, which questions learners miss, which paths produce faster proficiency. Analytics surface problems that design iterations can address.

Q5. Can LMS data measure ROI?

Partially. LMS data can correlate training with performance improvements. But proving causation requires controlling for confounding variables. LMS data contributes to ROI analysis but rarely proves it definitively.