Why LMS Content Strategy Is More Than Just Uploading Courses

LMS “ghost towns” aren’t caused by bad software. They’re caused by dump-and-run content strategy. If your completion rate is sub-40%, your architecture is the bottleneck, not your learners. The problem is what most teams call strategy: upload courses, assign by department, activate the platform. That’s inventory management.

Real LMS content strategy is the architecture that makes content work: learning objective definition, audience segmentation, sequencing logic, skill mapping, and measurement design. It answers why courses exist, who they’re for, in what order, and what “good” looks like when someone finishes. Platforms like SimpliTrain, Docebo, TalentLMS, Absorb LMS, and 360Learning provide the infrastructure. Infrastructure without architecture is just storage.

What Is an LMS Content Strategy, Really?

Three terms get conflated constantly. They’re not the same.

- A content library is inventory: organized, searchable, and accessible, but with no structure and no sequence.

- A learning path is a curated sequence leading a learner through a defined progression from A to B, with or without enforced prerequisites.

- A curriculum framework is the overarching design defining what skills exist across roles, how content maps to those skills, and how the system is maintained as content ages.

LMS content strategy requires all three layers. Library without paths produces confusion. Paths without curriculum produce disconnected sequences that don’t add up to skill development. Most organizations operate at layer one while imagining they’re at layer three.
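One way to picture the three layers is as nested data structures. The sketch below is illustrative only: the class and field names (`Course`, `LearningPath`, `CurriculumFramework`, `skill_map`) are hypothetical and do not come from any LMS product.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Course:
    """Layer 1: an item in the content library."""
    course_id: str
    title: str


@dataclass
class LearningPath:
    """Layer 2: an ordered sequence of course IDs for one audience."""
    name: str
    audience: str
    course_ids: list[str]
    enforce_prerequisites: bool = False


@dataclass
class CurriculumFramework:
    """Layer 3: maps each skill to the courses that build it."""
    skill_map: dict[str, list[str]] = field(default_factory=dict)

    def unmapped_courses(self, library: list[Course]) -> list[str]:
        """Return IDs of library courses that build no defined skill."""
        mapped = {cid for ids in self.skill_map.values() for cid in ids}
        return [c.course_id for c in library if c.course_id not in mapped]


# Hypothetical sample data.
library = [
    Course("c1", "Discovery calls"),
    Course("c2", "Objection handling"),
    Course("c3", "Office tour video"),
]
path = LearningPath("Sales onboarding", "new sales hires", ["c1", "c2"])
framework = CurriculumFramework({"consultative selling": ["c1", "c2"]})

print(framework.unmapped_courses(library))  # ['c3']
```

A course that appears in the library but in no skill mapping is a sign that the organization is still operating at layer one for that content.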

How Should You Structure LMS Courses – Linear, Modular, or Adaptive?

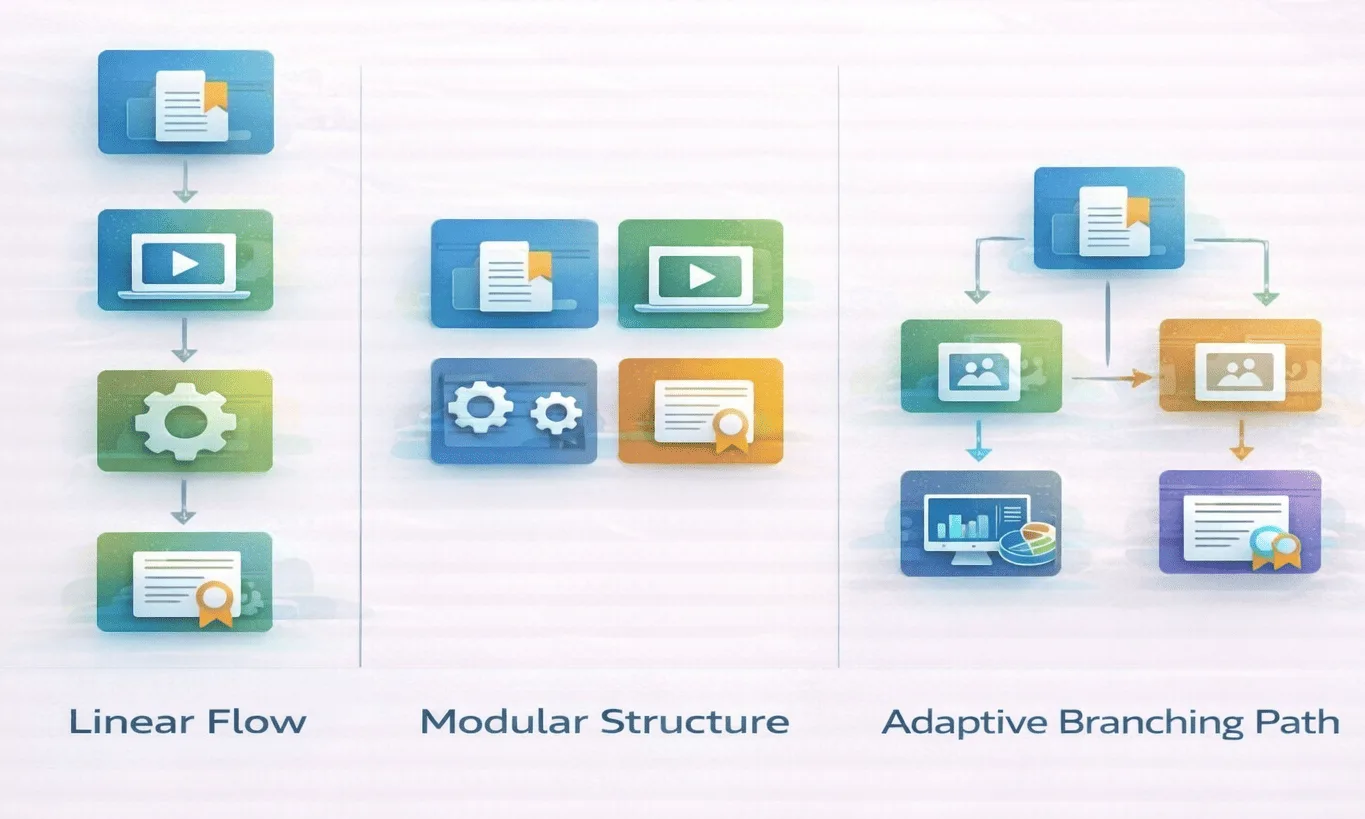

Three structural models. Each makes different assumptions about learners, compliance requirements, and organizational complexity.

1. Linear (Sequential) Structure: Fixed sequence, prerequisites enforced, and simple reporting (complete or incomplete). This is the standard model for mandatory compliance training: the audit trail is clear and everyone follows the same path. The limitation is rigidity: experienced employees sit through remedial content they don’t need.

2. Modular / Chunked Structure: Independent modules accessible in any order, reusable across multiple paths, and updatable without affecting unrelated content. Flexible access suits self-directed learners. Reporting complexity increases significantly: tracking granular, statement-level data across a modular library generally calls for xAPI (Experience API) rather than SCORM, for example, “User watched video X but skipped quiz Y” or “User completed module 3 before module 1.” SCORM was designed around completion status and score for each packaged unit; it is a poor fit for reporting non-linear navigation through a modular library.

3. Adaptive / Personalized Structure: Conditional progression based on assessment scores, role data, or learner behavior. For example, a learner who scores 80% or higher on a pre-assessment skips to advanced content, while one who scores below 70% branches to remedial support. Platforms like SimpliTrain support adaptive automation, but adaptive paths require structured metadata, skill taxonomies, and analytics infrastructure to operate correctly.
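The adaptive branching rule can be sketched as a small function. This is a minimal illustration, not any platform's configuration: the function name `next_step` and the branch labels are hypothetical, and because the text does not say what happens to scores from 70 up to 80, the sketch assumes those learners stay on a standard path.

```python
def next_step(pre_assessment_score: float) -> str:
    """Pick a branch from a pre-assessment score on a 0-100 scale."""
    if not 0 <= pre_assessment_score <= 100:
        raise ValueError("score must be between 0 and 100")
    if pre_assessment_score >= 80:
        return "advanced"   # skip ahead, per the 80%+ rule
    if pre_assessment_score < 70:
        return "remedial"   # branch to support, per the below-70% rule
    # Assumption: the 70-79 band is not defined in the text,
    # so this sketch keeps those learners on the default path.
    return "standard"


for score in (85, 75, 65):
    print(score, next_step(score))
```

Writing the rule down this way makes the undefined middle band visible, which is the kind of gap that tends to surface only after launch.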

Comparison Table: LMS Course Structure Models

| Model | How It Works | Advantages | Limitations | Best Context | Real-World Failure Mode |
|---|---|---|---|---|---|
| Linear | Fixed sequence, prerequisites enforced | Simple reporting, compliance audit trails | Ignores experience variance, throttles advanced learners | Mandatory compliance, regulated industries | Learner fatigue and “click-through syndrome”, completion without engagement |
| Modular | Independent chunks, flexible access | Reusable content, flexible sequencing | Requires xAPI (not SCORM) for meaningful reporting; metadata discipline essential | Product training, skill refreshers, mixed audiences | Metadata neglect renders analytics meaningless; paths become ungovernable at scale |
| Adaptive | Conditional progression based on data | Personalized relevance, efficient for experienced learners | High design complexity, requires platform capability and data maturity | Enterprise talent development, variable-entry certifications | Over-engineered branching logic that nobody maintains after launch |
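To make the xAPI requirement for modular paths concrete, here is a rough sketch of statement-level data. The actor/verb/object shape and the `http://adlnet.gov/expapi/verbs/completed` verb IRI follow the xAPI convention; the learner email, the activity IRIs under `example.com`, and the helper names `make_statement` and `completion_order` are assumptions made for illustration.

```python
def make_statement(email, verb, activity_id, timestamp):
    """Build a minimal xAPI-style statement: who did what to which activity."""
    return {
        "actor": {"mbox": f"mailto:{email}"},
        "verb": {
            "id": f"http://adlnet.gov/expapi/verbs/{verb}",
            "display": {"en-US": verb},
        },
        "object": {"id": activity_id},
        "timestamp": timestamp,
    }


BASE = "https://example.com/activities"  # hypothetical activity IRI prefix

statements = [
    make_statement("learner@example.com", "completed",
                   f"{BASE}/module-3", "2026-01-05T09:00:00Z"),
    make_statement("learner@example.com", "completed",
                   f"{BASE}/module-1", "2026-01-06T09:00:00Z"),
]


def completion_order(stmts):
    """Return module names in the order they were completed."""
    done = [s for s in stmts if s["verb"]["id"].endswith("/completed")]
    # ISO 8601 timestamps in the same UTC format sort correctly as strings.
    done.sort(key=lambda s: s["timestamp"])
    return [s["object"]["id"].rsplit("/", 1)[-1] for s in done]


print(completion_order(statements))  # ['module-3', 'module-1']
```

The output shows module 3 finished before module 1, the kind of non-linear navigation a single course-level completion flag would not reveal.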

Competency-Based vs Course-Based Learning Paths – What’s the Real Difference?

- Course-based paths are organized around completion. Finish five modules, receive a certificate, done. Simple to deploy, easy to report. Widely used for compliance training where the requirement is evidence of exposure, not demonstrated skill.

- Competency-based paths are organized around measurable skill development. The path isn’t finished when courses are completed; it’s finished when a learner can demonstrate defined capability through assessments, manager observation, or on-the-job application.

The practical difference: in a course-based model, 100% completion is success. In a competency-based model, 100% completion is irrelevant if assessments show the skill hasn’t transferred.
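The two definitions of "done" can be compared side by side. A minimal sketch, assuming assessment results are stored as a score per skill on a 0-100 scale; the `LearnerRecord` class, the function names, and the proficiency threshold of 75 are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class LearnerRecord:
    courses_completed: int
    courses_assigned: int
    assessment_scores: dict[str, float]  # skill name -> score (0-100)


def course_based_done(record: LearnerRecord) -> bool:
    """Course-based: finished when every assigned course is completed."""
    return record.courses_completed >= record.courses_assigned


def competency_based_done(record: LearnerRecord,
                          required: dict[str, float]) -> bool:
    """Competency-based: finished when every required skill meets its bar."""
    return all(
        record.assessment_scores.get(skill, 0.0) >= threshold
        for skill, threshold in required.items()
    )


# Hypothetical learner: all five courses done, but a weak assessment result.
record = LearnerRecord(5, 5, {"consultative selling": 55.0})
required = {"consultative selling": 75.0}  # assumed proficiency threshold

print(course_based_done(record))                 # True
print(competency_based_done(record, required))   # False
```

The same learner counts as a success in one model and as unfinished in the other, which is the whole difference between the two approaches.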

Docebo’s skill profiles and SimpliTrain’s learning outcomes features map content to competencies explicitly. Moodle supports both depending on configuration. The implementation gap: competency-based design requires someone to define what “proficient in consultative selling” actually means. That’s L&D design work, not platform configuration.

Pros and Cons: Course-Based vs Competency-Based

Course-Based

- Advantages: Fast deployment, clear completion metrics, straightforward compliance reporting

- Limitations: Weak linkage to skill transfer; completion rate can mask zero behavior change

Competency-Based

- Advantages: Direct alignment with performance outcomes, visible skill gaps, role-based development planning

- Limitations: Requires taxonomy design upfront, demands platform capability and assessor time

Microlearning vs Structured Curriculum – Which Fits Your LMS Content Strategy?

Two philosophies. Neither is universally right.

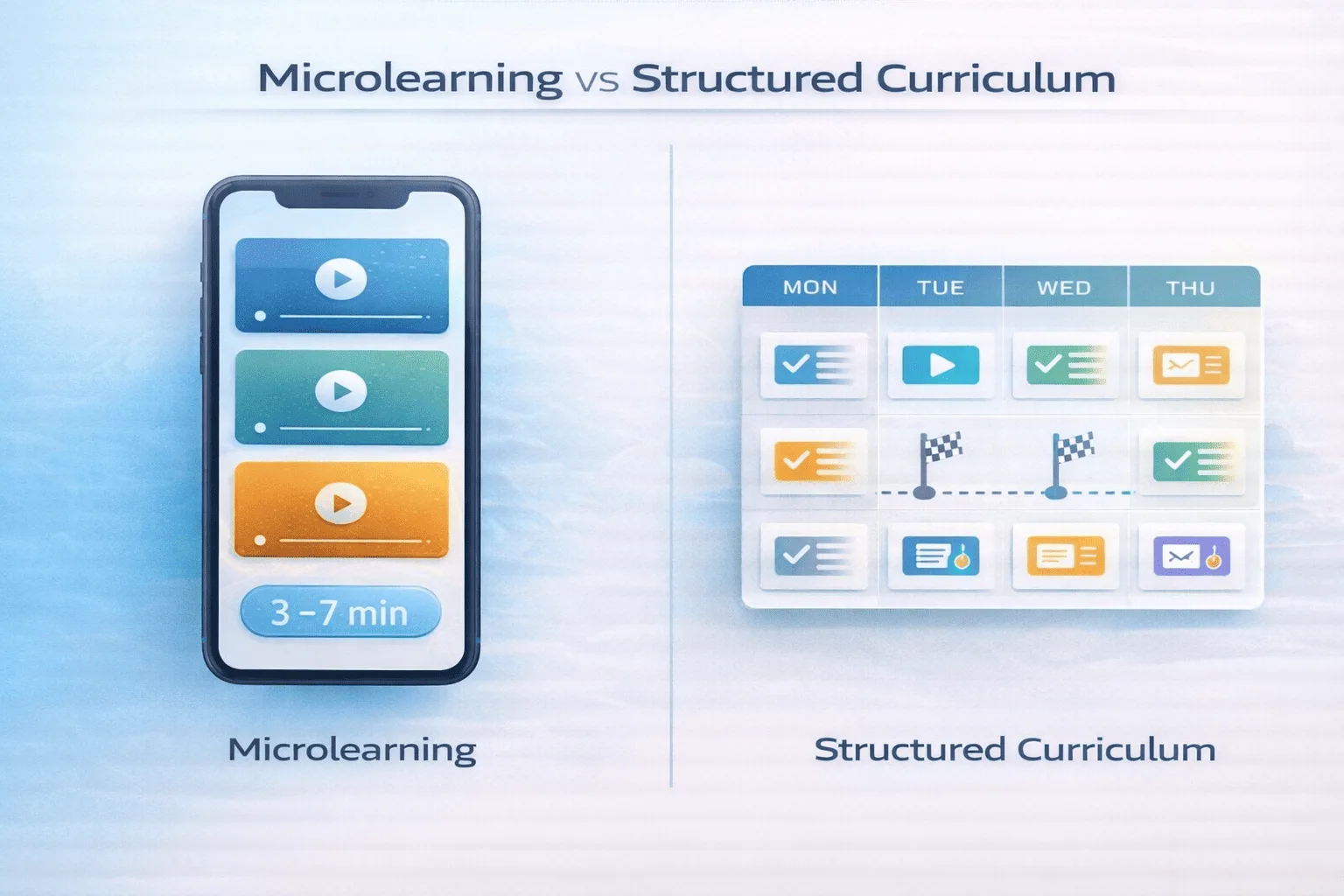

- Microlearning means short, focused modules, typically 3–7 minutes, for single-concept delivery. Mobile-friendly, accessible at the point of need. Suited for deskless workforces and just-in-time performance support. The risk: fragmentation. A library of 200 five-minute modules without sequencing logic isn’t a learning strategy; it’s inventory with a mobile app.

- Structured curriculum is scaffolded progression: modules building on each other over days or weeks. Required for certification programs, complex onboarding, and leadership development. The risk: length. Completion rates drop sharply past 4 hours of total content. Most L&D teams still build 8-hour onboarding programs and wonder why new hires abandon them by week two.

The practical test: what is the learner trying to accomplish, and when? A warehouse associate checking forklift safety procedures needs microlearning. A new sales engineer building product knowledge needs structured curriculum. Many organizations need both.

Comparison Table: Microlearning vs Structured Curriculum

| Dimension | Microlearning | Structured Curriculum | Operational Impact |
|---|---|---|---|
| Time commitment | 3–7 min per module | Hours to weeks per program | Affects scheduling and learner availability |
| Skill depth | Narrow, single-concept | Broad, scaffolded progression | Determines appropriate use case |
| Engagement pattern | Frequent, intermittent | Sustained, progressive | Shapes notification and reminder strategy |
| Content maintenance | High volume, frequent updates | Lower volume, periodic review | Affects content governance burden |
| Assessment style | Knowledge check per module | Cumulative, scenario-based | Determines evidence of learning |
| Reporting clarity | Easy per module, hard across sequences | Clear progression tracking | Affects stakeholder reporting |

From the Field

I’ve seen 40-module onboarding paths result in 60% drop-off by module 5. The content wasn’t bad; the path was a reasonable attempt to cover everything. That’s the problem.

In our experience, the Goldilocks Zone for a daily learning path is 12–15 minutes of total seat time. Not a module. A day. Learners who complete Day 1 at that cadence have significantly higher rates of returning for Day 2. Paths that open with a 45-minute module don’t get a Day 2. The implication for structured curriculum: stop delivering an 8-hour onboarding program in one or two sittings. Spread across 10 working days, the same 480 minutes comes to about 48 minutes a day, which is a start but still well above the 12–15 minute zone; holding to 15 minutes a day stretches the program to 32 working days. Same content. Dramatically different completion. The architecture is the intervention, not the content.
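The pacing arithmetic is easy to check. A minimal sketch, assuming an 8-hour program means 480 minutes of seat time; the function names `daily_minutes` and `days_needed` are illustrative.

```python
import math


def daily_minutes(total_minutes: float, days: int) -> float:
    """Seat time per day when content is spread evenly over `days`."""
    return total_minutes / days


def days_needed(total_minutes: float, minutes_per_day: float) -> int:
    """Whole days required at a fixed daily seat-time budget."""
    return math.ceil(total_minutes / minutes_per_day)


total = 8 * 60  # an 8-hour program, in minutes

print(daily_minutes(total, 10))  # 48.0 minutes a day over 10 days
print(days_needed(total, 15))    # 32 days at 15 minutes a day
print(days_needed(total, 12))    # 40 days at 12 minutes a day
```

Running the numbers before committing to a schedule shows how far a given program length is from the daily seat-time target.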

The 2026 Shift: Moving from Static Paths to Generative Learning Nodes

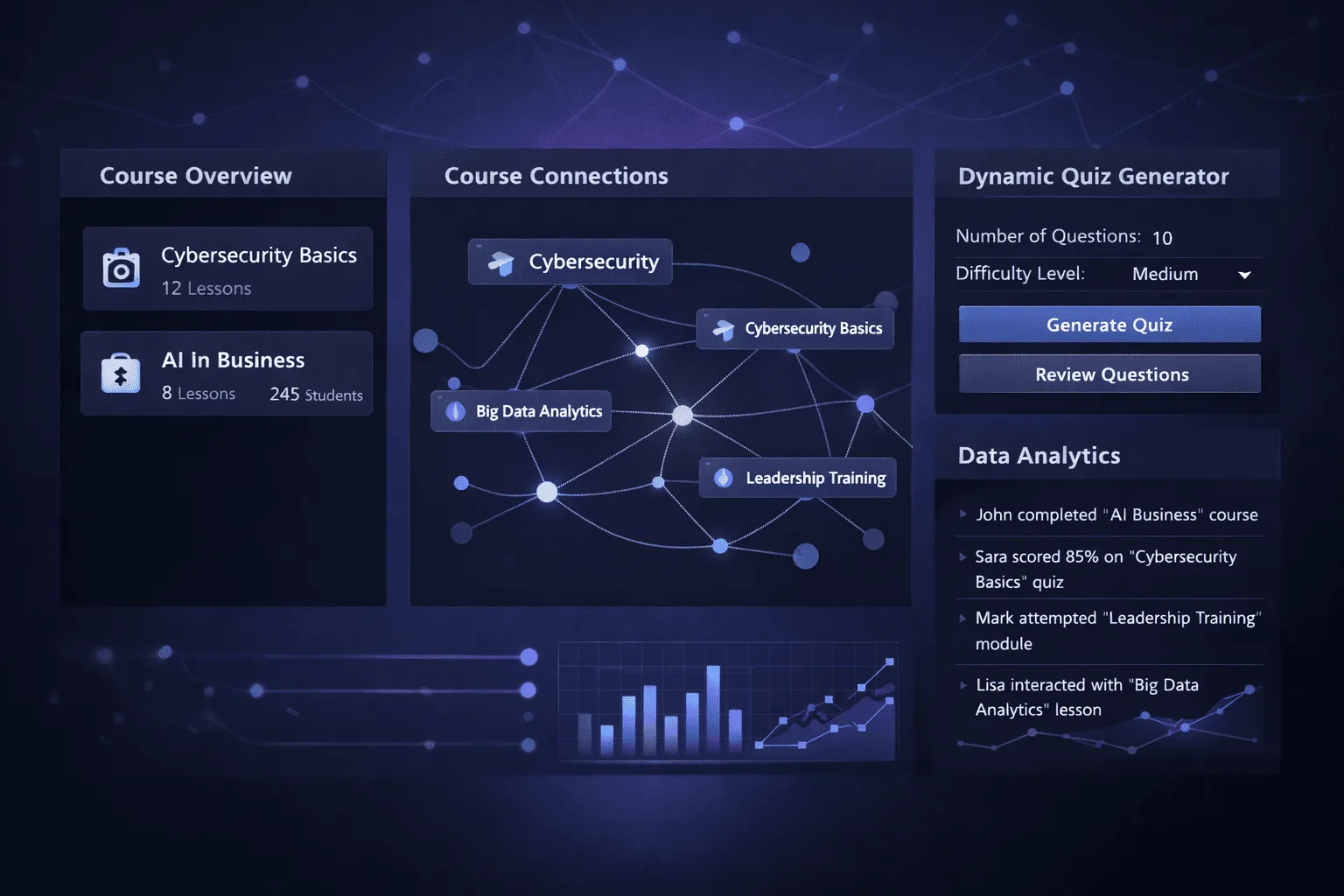

The most significant change to LMS content strategy in 2026 isn’t a new platform feature. It’s the integration of generative AI into the content layer itself.

- Traditional learning paths are static: build once, assign repeatedly, update annually. Generative learning nodes are dynamic: AI augments the path in real time based on learner behavior.

- AI-powered auto-tagging for metadata. Manually tagging 500 courses for skills, roles, and competencies takes weeks and goes out of date almost immediately. Platforms integrating LLMs can analyze course content and auto-generate metadata tags aligned to a defined skill taxonomy. This can cut tagging time from weeks to hours and improve recommendation accuracy, because tags reflect actual content rather than course titles.

- Just-in-time quiz generation for modular paths. Instead of static knowledge checks authored once at build time, AI-generated assessments can produce fresh question variants per learner session, reducing “answer memorization” patterns common in repeatedly assigned compliance training. Learners who’ve completed a module three times see different question framings, testing understanding rather than recall.

- Adaptive content gap detection. AI analysis of xAPI statement data can identify which learners consistently skip or fail specific modules, triggering automatic content recommendations or manager alerts, without manual reporting review.

The constraint: generative features require clean metadata, structured xAPI data, and governance decisions about what AI is authorized to modify. AI augmentation of a poorly tagged content library produces better-tagged garbage. The strategy layer must precede the AI layer.
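The gap-detection idea does not need AI to prototype; simple counting shows the shape of it. The sketch below assumes xAPI statements have already been reduced to `(learner, module, outcome)` tuples. That record format, the function name `flag_gap_modules`, and the 50% threshold are all hypothetical.

```python
from collections import defaultdict


def flag_gap_modules(records, threshold=0.5):
    """Return modules where the share of failed/skipped attempts >= threshold.

    `records` is an iterable of (learner, module, outcome) tuples with
    outcome in {"passed", "failed", "skipped"}.
    """
    totals = defaultdict(int)
    problems = defaultdict(int)
    for _learner, module, outcome in records:
        totals[module] += 1
        if outcome in ("failed", "skipped"):
            problems[module] += 1
    return sorted(m for m in totals if problems[m] / totals[m] >= threshold)


# Hypothetical sample data for three learners and two modules.
records = [
    ("ana", "m1", "passed"), ("ben", "m1", "passed"), ("cy", "m1", "skipped"),
    ("ana", "m2", "failed"), ("ben", "m2", "skipped"), ("cy", "m2", "passed"),
]

print(flag_gap_modules(records))  # ['m2']
```

A flagged module is only a prompt for review. Whether the fix is a content recommendation, a manager alert, or a rewrite of the module is a governance decision, as noted above.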

How Do LMS Platform Features Influence Your Content Strategy?

Platform capability doesn’t just support your strategy. It shapes what’s possible.

- Automation rules determine whether adaptive paths are feasible. LMS vendors such as SimpliTrain and Docebo offer learning plan automation that triggers content assignments based on role changes or assessment scores. Without automation, adaptive paths require manual administration, which doesn’t scale.

- Metadata tagging determines whether personalization is accurate. Robust tagging enables skill-based recommendations; weak metadata forces manual path assignment regardless of learner history.

- Analytics dashboards determine whether iteration happens. Absorb LMS’s module-level reporting identifies where completion drops and which content correlates with performance change. Without that data, strategy runs on intuition.

- Collaboration features change the design model. 360Learning builds peer engagement into path architecture, not as an add-on, but as a core structural assumption.

The principle: design content strategy within your platform’s actual capability. Strategy built on features you don’t have yet creates implementation debt.
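As a rough picture of what an automation rule does, the sketch below assigns paths when a learner's role changes. It is not any vendor's API: the `ROLE_PATHS` table, the path names, and the `on_role_change` function are hypothetical.

```python
# Hypothetical rule table: role -> learning paths that role should receive.
ROLE_PATHS = {
    "sales_engineer": ["product-foundations", "demo-skills"],
    "team_lead": ["coaching-basics"],
}


def on_role_change(new_role: str, already_assigned: set[str]) -> list[str]:
    """Return the paths to assign after a role change, skipping duplicates."""
    return [p for p in ROLE_PATHS.get(new_role, []) if p not in already_assigned]


print(on_role_change("sales_engineer", {"demo-skills"}))  # ['product-foundations']
print(on_role_change("warehouse_associate", set()))       # []
```

Without a rule like this running inside the platform, an administrator has to do the same lookup by hand for every role change.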

The LMS Strategy Audit: 8 Non-Negotiables

Use this before building any new path, or diagnosing why an existing one isn’t working.

[ ] Skills defined before content selected. If you can’t name the specific skill each module builds, the path isn’t strategy, it’s a content dump.

[ ] Structure matched to compliance, depth, or agility goal, not all three. Linear for compliance evidence. Modular for skills flexibility. Adaptive for talent development. Mixing models without purpose produces paths that excel at nothing.

[ ] Portability vs personalization decision made explicitly. Modular reuse requires different metadata infrastructure than adaptive personalization. This decision shapes your tagging architecture.

[ ] Metadata maturity assessed before adaptive design begins. Personalization runs on metadata quality. Weak tags produce irrelevant recommendations. Audit your current tagging before designing systems that depend on it.

[ ] Daily seat time designed at 12–15 minutes maximum. Not ideal time. Actual time. Paths designed for more than learners can realistically give will fail by design.

[ ] xAPI confirmed (not just SCORM) for any modular or adaptive path. SCORM cannot track non-linear navigation. If your tracking standard doesn’t match your path structure, your analytics are incomplete.

[ ] Manager reinforcement built into the design, not bolted on. Identify the specific 1:1 touchpoint, performance review question, or team ritual that references path progress. If it doesn’t exist, add it before launch.

[ ] Success metric defined before building begins. Completion rate, assessment score, time-to-competency, or on-the-job performance change. Choose one primary metric. Measure it at launch and 90 days later. The metric you choose determines what behavior your strategy actually optimizes for.
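A few of these checks are mechanical enough to automate. The sketch below covers only three of the eight items (tracking standard, daily seat time, and success metric); the `PathSpec` fields and the `audit` function are hypothetical, and the remaining items still need human judgment.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PathSpec:
    structure: str                 # "linear", "modular", or "adaptive"
    tracking: str                  # "scorm" or "xapi"
    daily_minutes: list[float]     # planned seat time for each day
    primary_metric: Optional[str]  # e.g. "time-to-competency"


def audit(spec: PathSpec, max_daily_minutes: float = 15) -> list[str]:
    """Return a list of checklist violations; an empty list means none found."""
    issues = []
    if spec.structure in ("modular", "adaptive") and spec.tracking != "xapi":
        issues.append("tracking: modular/adaptive path without xAPI")
    over = [i + 1 for i, m in enumerate(spec.daily_minutes)
            if m > max_daily_minutes]
    if over:
        issues.append(f"seat time: days {over} exceed {max_daily_minutes} minutes")
    if not spec.primary_metric:
        issues.append("metric: no primary success metric defined")
    return issues


# Hypothetical path that fails all three checks.
draft = PathSpec("modular", "scorm", [12, 45, 14], None)
for issue in audit(draft):
    print(issue)
```

Running a check like this before launch catches the structural mismatches early, while the remaining items on the list stay with the L&D team.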

FAQ

Q1. What is the ROI difference between course-based and competency-based learning paths?

Course-based paths produce measurable completion ROI: you can prove everyone took the training. Competency-based paths produce performance ROI: you can prove skill levels changed. The business case difference: completion evidence satisfies auditors; competency evidence satisfies CFOs asking whether training spend is changing behavior. Organizations with strong L&D business case requirements increasingly need competency-based measurement, even if the delivery mechanism looks similar.

Q2. How do I convince stakeholders to move away from 8-hour linear onboarding?

Bring completion data, not philosophy. Pull the drop-off curve from your current onboarding path; in many organizations, half or more of new hires never get past module 3 of a long linear program. Reframe the proposal: “We’re not reducing content, we’re distributing it across 10 days at about 48 minutes per day.” Same total hours, dramatically higher completion.
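Pulling the drop-off curve can be as simple as the sketch below. It assumes you can export, for each new hire, the number of the last module they completed; the function name `drop_off_curve` and the sample data for ten hires are hypothetical, not real results.

```python
def drop_off_curve(last_module_completed, total_modules):
    """Share of learners who completed at least module k, for k = 1..total."""
    n = len(last_module_completed)
    return [
        sum(1 for m in last_module_completed if m >= k) / n
        for k in range(1, total_modules + 1)
    ]


# Hypothetical export: last module completed by each of 10 new hires
# on a 5-module path (0 means the learner never finished module 1).
last_completed = [5, 5, 3, 2, 2, 1, 0, 5, 3, 1]

curve = drop_off_curve(last_completed, 5)
print(curve)  # [0.9, 0.7, 0.5, 0.3, 0.3]
```

In this made-up sample, half the cohort is gone by module 3. A curve like this, built from your own data, is the evidence to put in front of stakeholders.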

Q3. What is the difference between a curriculum and a learning path?

A curriculum is the broader framework defining skills across roles and how content maps to them. A learning path is a structured sequence within the LMS delivering a specific portion of that curriculum to a specific audience.

Q4. Can LMS learning paths be personalized?

Yes, depending on platform capability and data maturity. Adaptive paths require skill taxonomy, xAPI-compatible tracking, metadata tagging, and automation rules. In 2026, AI-augmented platforms can auto-tag content and generate adaptive assessments, but only if the underlying data infrastructure is clean. Simpler platforms approximate personalization through role-based path assignment.