A Learning Record Store (LRS) is often described as “a system that stores xAPI statements.” While technically correct, that definition barely scratches the surface of what an LRS actually represents inside a modern learning ecosystem. In practice, an LRS sits at the center of a distributed learning data architecture. It exists because traditional LMS reporting was never designed to capture learning that happens outside structured, browser-based courses. As organizations adopted mobile learning, simulations, offline training, and experiential programs, a new data layer became necessary. This article explains not only what an LRS is, but also how it works architecturally, where it creates value, and where implementation failures most often occur.

What a Learning Record Store Actually Does

At its core, a Learning Record Store receives, stores, and returns learning activity data in the form of xAPI (Experience API) statements. An xAPI statement follows a structured model:

Actor → Verb → Object

“Maria completed Safety Module A.”

But unlike LMS tracking logs, these statements are transmitted as structured JSON objects through RESTful API endpoints. A conformant LRS must:

- Accept xAPI statements

- Validate them against the specification

- Store them without altering semantic meaning

- Allow authorized systems to query and retrieve them
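To make the Actor → Verb → Object model concrete, here is a minimal sketch of the example statement as a JSON-serializable Python dictionary, with a very rough structural check. The helper names (`build_statement`, `basic_problems`), the email address, and the `example.com` activity ID are illustrative assumptions, and the check covers only a tiny fraction of what a conformant LRS validates.

```python
import json

REQUIRED_KEYS = ("actor", "verb", "object")


def build_statement(name, mbox, verb_id, verb_display, activity_id, activity_name):
    """Assemble a minimal Actor -> Verb -> Object statement as a plain dict."""
    return {
        "actor": {"objectType": "Agent", "name": name, "mbox": mbox},
        "verb": {"id": verb_id, "display": {"en-US": verb_display}},
        "object": {
            "objectType": "Activity",
            "id": activity_id,
            "definition": {"name": {"en-US": activity_name}},
        },
    }


def basic_problems(statement):
    """Return a list of structural problems (empty list = basic shape looks fine).

    This is a crude illustration, not full specification validation.
    """
    problems = [f"missing '{key}'" for key in REQUIRED_KEYS if key not in statement]
    verb_id = statement.get("verb", {}).get("id", "")
    # Crude heuristic: an IRI has a scheme, so it contains at least one colon.
    if "verb" in statement and ":" not in verb_id:
        problems.append("verb.id should be an IRI")
    return problems


# "Maria completed Safety Module A." (identifiers below are hypothetical)
statement = build_statement(
    "Maria", "mailto:maria@example.com",
    "http://adlnet.gov/expapi/verbs/completed", "completed",
    "https://example.com/activities/safety-module-a", "Safety Module A",
)
print(json.dumps(statement, indent=2))
print(basic_problems(statement))
```

Note that the verb is identified by an IRI rather than by the display word, which is what lets different systems agree on meaning.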

Technically, many LRS implementations use flexible storage structures (often NoSQL-style architectures) to handle high-volume JSON data efficiently. This flexibility enables event-level tracking across systems, not just course completion events. Importantly, an LRS does not deliver content. That remains the role of the LMS. The LRS focuses purely on capturing and retrieving learning data.

Why the LRS Emerged: The SCORM Limitation

To understand the need for an LRS, you must understand the limitations of SCORM-based tracking. SCORM was effective for:

- Browser-based course tracking

- Completion status

- Assessment scoring

However, SCORM struggled with:

- Offline environments

- Mobile applications

- Simulation engines

- Real-world experiential learning

The introduction of xAPI addressed these limitations by allowing any learning system to send structured statements to a central repository: the LRS. In other words, the LRS exists because learning no longer happens in one place.

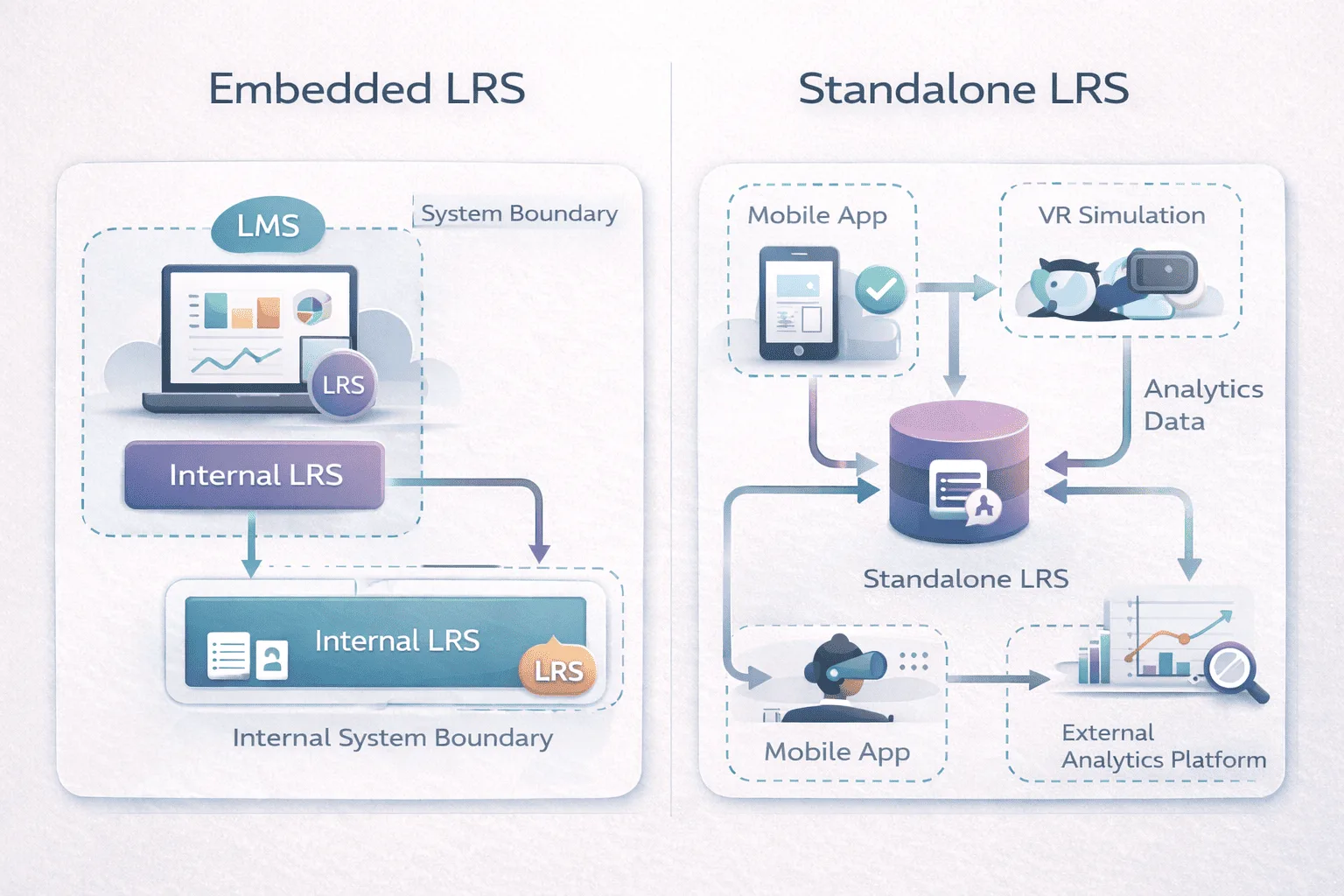

Choosing an Embedded vs Standalone LRS for Enterprise Learning Ecosystems

One of the most important architectural decisions is whether to use an embedded or standalone LRS.

Embedded LRS

An embedded LRS exists within an LMS or learning platform. It typically tracks xAPI data generated inside that ecosystem. Advantages include:

- Faster implementation

- Reduced integration complexity

- Simplified governance

Limitations include:

- Restricted cross-platform visibility

- Limited ability to aggregate external data

Standalone LRS

A standalone LRS operates independently and accepts statements from multiple sources: LMS platforms, mobile apps, simulations, or other learning tools. Advantages include:

- Centralized learning data hub

- Cross-system interoperability

- Greater analytics flexibility

Limitations include:

- Increased integration complexity

- Higher governance requirements

- Greater architectural oversight

Comparison Overview

| Dimension | Embedded LRS | Standalone LRS |
|---|---|---|
| Integration Scope | Platform-bound | Multi-system |
| Deployment Complexity | Lower | Higher |
| Data Centralization | Limited | Strong |
| Analytics Flexibility | Moderate | High |
| Governance Overhead | Moderate | Significant |

The distinction is less about superiority and more about ecosystem scale.

LRS Governance Best Practices to Prevent xAPI Verbal Drift

In my experience auditing xAPI deployments, technical installation is rarely the issue. Governance is. Because xAPI allows flexible verbs and object definitions, organizations often underestimate the importance of vocabulary control. For example, if one developer uses the verb “completed” and another uses “finished” for the same activity, reporting queries fragment, dashboards return inconsistent results, and analytics become unreliable. This phenomenon, often called verbal drift, is one of the most common LRS governance failures. Before deployment, organizations should define:

- Controlled verb vocabularies

- Actor identification standards

- Object naming conventions

- Version control policies

Without this framework, an LRS becomes a high-volume archive of inconsistent statements rather than a reliable analytics engine.
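A controlled verb vocabulary can be enforced with a simple lookup before statements are sent, or applied later in the reporting layer. The sketch below is one possible approach, not a standard mechanism: the alias table, the `check_verb` helper, and the `example.com` verb IRIs are hypothetical, while the "completed" IRI is the one published in the ADL vocabulary.

```python
from collections import Counter

COMPLETED = "http://adlnet.gov/expapi/verbs/completed"

# Hypothetical governance tables: the approved verbs, and known drifted
# variants mapped back to the approved verb they should have used.
APPROVED_VERBS = {COMPLETED}
VERB_ALIASES = {
    "https://example.com/verbs/finished": COMPLETED,
    "https://example.com/verbs/done": COMPLETED,
}


def check_verb(statement):
    """Return (canonical_verb_id, status); status is 'approved', 'alias' or 'unknown'."""
    verb_id = statement["verb"]["id"]
    if verb_id in APPROVED_VERBS:
        return verb_id, "approved"
    if verb_id in VERB_ALIASES:
        return VERB_ALIASES[verb_id], "alias"
    return verb_id, "unknown"


statements = [
    {"verb": {"id": COMPLETED}},
    {"verb": {"id": "https://example.com/verbs/finished"}},
    {"verb": {"id": "https://example.com/verbs/watched"}},
]

# Raw counts fragment across variants; canonical counts group them together.
raw_counts = Counter(s["verb"]["id"] for s in statements)
canonical_counts = Counter(check_verb(s)[0] for s in statements)
print(raw_counts[COMPLETED], canonical_counts[COMPLETED])
```

Because an LRS is expected to store statements without altering their meaning, a mapping like this belongs either in the sending systems (so drift never reaches the LRS) or in the reporting queries, rather than inside the store itself. Verbs flagged as "unknown" are candidates for review, not automatic rejection.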

Storage-Focused vs Analytics-Enabled LRS Models

Another distinction in the market is between pure storage LRS systems and analytics-enabled platforms.

Storage-Focused LRS

These systems prioritize standards compliance and data capture. They expose APIs for querying but rely on external BI tools for visualization. Best suited for:

- Organizations with established analytics infrastructure

- Teams already using tools like Power BI or Tableau

- Data engineering-mature environments
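With a storage-focused LRS, the handoff to a BI tool usually involves flattening nested statements into rows. The following is a minimal sketch of that step, assuming the statements have already been retrieved from the LRS; the column choice and the `flatten` and `to_csv` helpers are illustrative, and a real pipeline would likely carry more fields (results, context, extensions).

```python
import csv
import io

COLUMNS = ["actor", "verb", "object", "timestamp"]


def flatten(statement):
    """Reduce one nested statement to a flat row of identifiers."""
    actor = statement.get("actor", {})
    return {
        "actor": actor.get("mbox") or actor.get("name", ""),
        "verb": statement.get("verb", {}).get("id", ""),
        "object": statement.get("object", {}).get("id", ""),
        "timestamp": statement.get("timestamp", ""),
    }


def to_csv(statements):
    """Render statements as CSV text that a BI tool could import."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS)
    writer.writeheader()
    for statement in statements:
        writer.writerow(flatten(statement))
    return buffer.getvalue()


# Hypothetical sample statement, as it might come back from a query.
sample = [{
    "actor": {"name": "Maria", "mbox": "mailto:maria@example.com"},
    "verb": {"id": "http://adlnet.gov/expapi/verbs/completed"},
    "object": {"id": "https://example.com/activities/safety-module-a"},
    "timestamp": "2024-01-01T00:00:00Z",
}]
print(to_csv(sample))
```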

Analytics-Enabled LRS

These include dashboards and built-in reporting tools. Best suited for:

- Teams without dedicated data analysts

- Organizations needing faster visibility

- Smaller L&D departments

Decision Matrix

| Scenario | Storage-Only LRS | Analytics-Enabled LRS |
|---|---|---|
| Mature BI Team | ✔ | Optional |
| No Internal Analytics Capability | Risky | ✔ |
| High Data Volume | ✔ | Depends on scalability |
| Basic Compliance Tracking | Overkill | ✔ |

The correct model depends on internal technical maturity, not feature lists.

Data Volume and Performance Considerations

xAPI’s flexibility allows granular event capture. This can be powerful, but also problematic. In simulation-heavy environments (for example, VR training), a single session can generate thousands of statements. If batching and filtering strategies are not designed properly, LRS performance may degrade. Common operational issues include:

- Statement latency under burst load

- Misconfigured authentication tokens

- API throttling

- Storage cost escalation

An LRS should be treated as infrastructure, not simply a feature.
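One way to reduce burst load is to filter out low-value events on the client and group the rest into batches, since the xAPI statements resource accepts an array of statements in a single POST. The sketch below shows only the filtering and grouping logic; the `select_and_batch` helper, the "noisy" verb IRI, and the batch size are assumptions, and any real size limit depends on the specific LRS.

```python
def select_and_batch(statements, batch_size, keep=lambda statement: True):
    """Yield lists of at most batch_size statements for which keep() is true."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    batch = []
    for statement in statements:
        if not keep(statement):
            continue  # filtered out before it ever reaches the LRS
        batch.append(statement)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:
        yield batch  # final partial batch


# Hypothetical VR session: head-movement events are considered noise here.
NOISY_VERB = "https://example.com/verbs/moved-head"
USEFUL_VERB = "https://example.com/verbs/operated-valve"
session = [
    {"verb": {"id": USEFUL_VERB}},
    {"verb": {"id": NOISY_VERB}},
    {"verb": {"id": USEFUL_VERB}},
    {"verb": {"id": NOISY_VERB}},
    {"verb": {"id": USEFUL_VERB}},
]

batch_sizes = [
    len(batch)
    for batch in select_and_batch(
        session, batch_size=2, keep=lambda s: s["verb"]["id"] != NOISY_VERB
    )
]
print(batch_sizes)
```

Each yielded batch would then be sent as one request, turning thousands of individual calls into a much smaller number.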

When an LRS Adds Strategic Value

An LRS is most impactful when learning experiences span multiple environments. High-value contexts include:

- Simulation-based training

- Mobile field workforce programs

- Informal learning tracking

- Cross-platform certification reporting

- Blended enterprise ecosystems

In contrast, organizations running only structured compliance courses within a single LMS may not require the complexity of a standalone LRS. The decision is architectural, not aspirational.

Common Misconceptions

- “An LRS replaces an LMS.” It does not. The LMS manages content and enrollment. The LRS manages event data.

- “More data means better insight.” Unstructured or poorly governed data reduces insight quality.

- “An LRS automatically improves analytics.” Analytics depends on taxonomy design and query discipline.

Learning Record Store Explained: The Structural Takeaway

A Learning Record Store is a standards-based xAPI data repository designed to unify distributed learning activity across systems. It becomes powerful when:

- Multiple platforms generate learning data

- Cross-system reporting is required

- Offline or experiential learning must be captured

It becomes problematic when:

- Governance frameworks are absent

- Data vocabulary is inconsistent

- Implementation treats storage as insight

The real value of an LRS is not in its definition. It lies in its architecture and discipline. When properly governed, it forms the backbone of modern learning analytics. When poorly structured, it becomes an expensive data archive.

FAQ

Q1. Why are my xAPI statements not appearing?

Most often due to incorrect endpoint URLs, token authentication errors, or malformed JSON structures.
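As a debugging aid, it can help to build the request explicitly and inspect each of these three points before sending. The sketch below assumes HTTP Basic authentication with a key and secret (some LRS products use OAuth instead) and a hypothetical endpoint; the `build_request` helper is illustrative. The `X-Experience-API-Version` header is required by the xAPI specification and is another frequent omission.

```python
import base64
import json
import urllib.request


def build_request(endpoint, key, secret, statement):
    """Build (but do not send) a POST request for one statement."""
    # Endpoint problems: the statements resource lives under <endpoint>/statements.
    url = endpoint.rstrip("/") + "/statements"
    # Authentication problems: Basic auth is base64("key:secret").
    token = base64.b64encode(f"{key}:{secret}".encode("utf-8")).decode("ascii")
    # Malformed JSON: json.dumps raises TypeError if the statement cannot be serialized.
    body = json.dumps(statement).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Basic {token}",
            "Content-Type": "application/json",
            "X-Experience-API-Version": "1.0.3",
        },
    )


# Hypothetical endpoint and credentials, for illustration only.
request = build_request(
    "https://lrs.example.com/xapi/", "key", "secret",
    {
        "actor": {"mbox": "mailto:maria@example.com"},
        "verb": {"id": "http://adlnet.gov/expapi/verbs/completed"},
        "object": {"id": "https://example.com/activities/safety-module-a"},
    },
)
print(request.get_method(), request.full_url)
# To actually send it: urllib.request.urlopen(request)
```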

Q2. Why is reporting inconsistent?

Usually caused by uncontrolled verb variation or inconsistent actor identification fields.

Q3. Can an LRS track offline learning?

Yes. xAPI statements can be cached locally and synced when connectivity is restored.
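A minimal sketch of that cache-and-sync pattern is shown below, assuming a local file with one JSON statement per line. The `OfflineQueue` class and the `send` callback are hypothetical; a real client would plug in its own transport. Assigning the statement `id` and `timestamp` at capture time means the record reflects when the experience happened, and a retried upload can be recognized as the same statement.

```python
import json
import tempfile
import uuid
from datetime import datetime, timezone
from pathlib import Path


class OfflineQueue:
    """File-backed queue holding one JSON statement per line (illustrative only)."""

    def __init__(self, path):
        self.path = Path(path)

    def add(self, statement):
        record = dict(statement)
        # Set id and timestamp at capture time, not at sync time.
        record.setdefault("id", str(uuid.uuid4()))
        record.setdefault("timestamp", datetime.now(timezone.utc).isoformat())
        with self.path.open("a", encoding="utf-8") as handle:
            handle.write(json.dumps(record) + "\n")

    def pending(self):
        if not self.path.exists():
            return []
        with self.path.open(encoding="utf-8") as handle:
            return [json.loads(line) for line in handle if line.strip()]

    def sync(self, send):
        """Call send(statements) -> bool; clear the queue only if it succeeds."""
        statements = self.pending()
        if not statements or not send(statements):
            return 0
        self.path.unlink()
        return len(statements)


with tempfile.TemporaryDirectory() as tmp:
    queue = OfflineQueue(Path(tmp) / "pending.jsonl")
    queue.add({"verb": {"id": "http://adlnet.gov/expapi/verbs/completed"}})
    failed = queue.sync(lambda batch: False)   # still offline: nothing is removed
    still_pending = len(queue.pending())
    sent = queue.sync(lambda batch: True)      # connectivity restored
    remaining = len(queue.pending())
```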

Q4. Is an LRS required for compliance tracking?

Not always. Many compliance programs operate successfully within LMS-only reporting frameworks.